For the last couple of weeks I've been using R to understand the reference desk statistics we collect with LibStats. I'm going to write it up in a few posts, with R code that any other LibStats library can use to look at its own numbers.

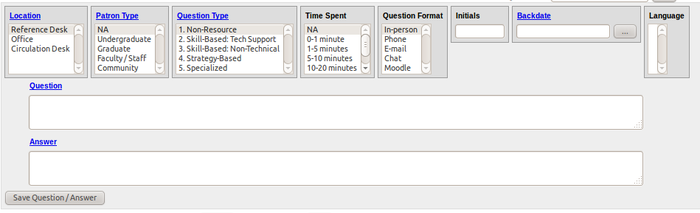

First, a few things about LibStats. This is what it looks like:

For each reference desk encounter, we enter this information:

- Location. One of: Reference Desk, Office, Circulation Desk.

- Patron Type. Not required and largely unused. One of: NA, Undergraduate, Graduate, Faculty/Staff, Community.

- Question Type. One of:

    1. Non-Resource. These could be answered with a sign: "Where's the bathroom?" "What time do you close?"

    2. Skill-Based: Tech Support. "The printer is jammed." "How do I use the scanner?"

    3. Skill-Based: Non-Technical. These are academic in nature but have a definite answer. "Do you have The Hockey Stick and the Climate Wars: Dispatches from the Front Lines by Michael E. Mann?" "How can I find this article my prof put on reserve?"

    4. Strategy-Based. These require a reference interview and involve the librarian and user discussing what the user needs and how he or she can best find it. "I need three peer-reviewed articles about X for an assignment." "I'm writing a paper and need to find out about Y and its influence on Z." Information literacy instruction is involved.

    5. Specialized. These are questions requiring special expert knowledge or the use of a special database or tool. All librarians can answer any general questions about whatever subjects their library covers, but deeper questions are referred to a subject librarian, and those are marked as Specialized. Also, questions requiring census data, or some arcane science or business or economics database or the like, would be counted here.

- Time Spent. One of: NA, 0-1 minute, 1-5 minutes, 5-10 minutes, 10-20 minutes, 20-30 minutes, 30-60 minutes, 60+ minutes.

- Question Format. One of: In-person, Phone, E-mail, Chat, Moodle. (I'm not sure how those last two are different.)

- Initials. In my case, WD.

- Time stamp. Added automatically by the system, but it's possible to backdate questions.

- Question. The question asked. Not required (though it should be).

- Answer. The answer given. Not required (though it should be too).

The "Question Type" factor was the subject of the most debate when LibStats got rolled out last year, for two reasons. First, we all need to agree on the meaning of each category so that things can be fairly compared across branches. There are sample questions for each category to help guide us, and I think we all have a generally similar understanding, but there's certainly some variance, with different people assigning essentially the same question to different categories.

Second, question types 1-3 (Non-Resource and the two Skill-Based types) don't require a librarian, but types 4-5 (Strategy-Based and Specialized) do. Instituting this system was seen by some as possibly the first step in taking librarians off the desk and moving to some kind of blended or triage model where non-academic staff would be at the desk and then refer users to librarians as necessary for the more advanced questions. That kind of triage model is in place at our biggest and busiest branch, as we'll see, but has not happened at the other branches. My analysis will help show whether or not it's a good idea.

LibStats has various reports built into it, such as "Questions by Question Type" or "Questions by Time of Day," and it's supposed to let you export them to Excel. The reports aren't very good, though, and the export to Excel link doesn't work (probably a local problem, but I couldn't be bothered to report it). So I used the "Data Dump" option to download a complete dump of all the questions from all the branches. It's a CSV file with these columns:

question_id, patron_type, question_type, time_spent, question_format, library_name, location_name, language, added_stamp, asked_at, question_time, question_half_hour, question_date, question_weekday, initials

(You'll also notice that Question and Answer are not included. You'd have to query the database directly to get them, but for what I'm after I didn't need to get into that.)

You can see how most of those map to the fields in the entry form, though the time the question was asked (asked_at) and the time it was entered (added_stamp) are separated. asked_at is a full timestamp, but not in a proper standard form. question_time and the columns after it are other forms of the same time, broken down to the day or half-hour or what have you, which makes it easier to generate tables in Excel, where I imagine it's painful to deal with timestamps.
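Since the dump has a header row, the columns can be pulled out by name. Here's a minimal sketch of that using Ruby's standard csv library. The sample row is made up to match the column list above (fields are left blank where I'm not showing a real value), and `WANTED` and `pick_columns` are names invented for this example, not anything LibStats provides.

```ruby
require 'csv'

# One made-up row in the shape of the data dump. Illustrative only:
# blank fields stand in for values not shown in this post.
SAMPLE_DUMP = <<~CSV
  question_id,patron_type,question_type,time_spent,question_format,library_name,location_name,language,added_stamp,asked_at,question_time,question_half_hour,question_date,question_weekday,initials
  1,,4. Strategy-Based,5-10 minutes,In-person,Scott,Drop-in Desk,,,2/01/2011 09:20:11 AM,,,,,xq
CSV

# The columns worth keeping, named by header instead of by position
WANTED = %w[question_type question_format time_spent library_name location_name initials asked_at]

# Returns one array per data row, holding only the WANTED columns in order
def pick_columns(csv_text)
  CSV.parse(csv_text, headers: true).map do |row|
    WANTED.map { |name| row[name] }
  end
end

rows = pick_columns(SAMPLE_DUMP)
puts rows.first.inspect
```

Looking columns up by name means the code keeps working if the dump ever gains or reorders a column, at the cost of breaking loudly if a header is renamed.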

In R it's not, so I don't need that stuff. But I do need to turn the asked_at timestamp into a proper timestamp, and I want to ignore all data from before February 2011 because it's incomplete or test data. Also, in August 2011 we renamed the Question Types, so the older rows need the new names. To take care of all that I wrote a quick Ruby script:

```ruby
#!/usr/bin/env ruby

require 'rubygems'
require 'date'        # needed for Date.parse
require 'faster_csv'  # Ruby 1.8 gem; it became the standard csv library in Ruby 1.9

arr_of_arrs = FasterCSV.read("all_libraries.csv")

puts "question.type,question.format,time.spent,library.name,location.name,initials,timestamp"

# Skip the header row, then pick out the wanted columns by position
arr_of_arrs[1..-1].each do |row|
  question_type   = row[2]
  time_spent      = row[3]
  question_format = row[4]
  library_name    = row[5]
  location_name   = row[6]
  timestamp       = row[9]  # asked_at
  initials        = row[14].to_s.upcase

  # Before Feb 2011 it was pretty much all test data
  next if Date.parse(timestamp) < Date.parse("2011-02-01")

  # Clean up data from before the question_type factors were changed
  if question_type == "2a. Skill-Based: Technical"
    question_type = "2. Skill-Based: Tech Support"
  end
  if question_type == "2b. Skill-Based: Non-Technical"
    question_type = "3. Skill-Based: Non-Technical"
  end
  if question_type == "3. Strategy-Based"
    question_type = "4. Strategy-Based"
  end
  if question_type == "4. Specialized"
    question_type = "5. Specialized"
  end

  # Timestamp is formatted so one-digit days are possible, so prepend 0 if necessary
  if timestamp.index("/") == 1
    timestamp = "0" + timestamp
  end

  csvline = [question_type, question_format, time_spent, library_name, location_name, initials, timestamp]
  puts csvline.to_csv
end
```

Looking at that now I realize I should have used if/else if, or switch, but that's hardly the worst code you're going to see in these posts so the hell with it.
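For what it's worth, here's roughly what a tidier version of the two clean-up steps could look like: a hash lookup instead of the chain of ifs, and an explicit format string for the timestamp. It's a sketch, not the script I ran. It assumes asked_at is month/day/year with a 12-hour clock, and that is an assumption on my part. Newer Rubies read an ambiguous date like 02/01/2011 in `Date.parse` as day/month, so spelling the format out avoids relying on a guess. The names `RENAMED_TYPES`, `ASKED_AT_FORMAT`, `normalize_type`, `asked_on`, `pad_timestamp` and `keep?` are all made up for the example.

```ruby
require 'date'

# Old question_type labels mapped to the names used after the rename
RENAMED_TYPES = {
  "2a. Skill-Based: Technical"     => "2. Skill-Based: Tech Support",
  "2b. Skill-Based: Non-Technical" => "3. Skill-Based: Non-Technical",
  "3. Strategy-Based"              => "4. Strategy-Based",
  "4. Specialized"                 => "5. Specialized"
}.freeze

# ASSUMPTION: asked_at is month/day/year with a 12-hour clock
ASKED_AT_FORMAT = "%m/%d/%Y %I:%M:%S %p"

# Everything before this date is treated as test data
CUTOFF = Date.new(2011, 2, 1)

# Returns the new label for an old one, or the label unchanged if it isn't in the table
def normalize_type(question_type)
  RENAMED_TYPES.fetch(question_type, question_type)
end

# Parses the date part of an asked_at string using the explicit format
def asked_on(timestamp)
  Date.strptime(timestamp, ASKED_AT_FORMAT)
end

# Prepends a 0 when the first field of the timestamp has only one digit
def pad_timestamp(timestamp)
  timestamp.index("/") == 1 ? "0" + timestamp : timestamp
end

# True if the question was asked on or after the cut-off date
def keep?(timestamp)
  asked_on(timestamp) >= CUTOFF
end

puts normalize_type("2a. Skill-Based: Technical")
puts pad_timestamp("2/01/2011 09:20:11 AM")
puts keep?("1/31/2011 04:00:00 PM")
```

The hash keeps the old and new names side by side in one place, and `fetch` with a default leaves anything not in the table untouched.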

Whenever I download the full data dump I run clean-up-libstats.rb > libstats.csv and get a file that looks like this:

```
question.type,question.format,time.spent,library.name,location.name,initials,timestamp
4. Strategy-Based,In-person,5-10 minutes,Scott,Drop-in Desk,XQ,02/01/2011 09:20:11 AM
4. Strategy-Based,In-person,10-20 minutes,Scott,Drop-in Desk,XQ,02/01/2011 09:43:09 AM
```

That's the file I analyze in R, and I'll start on that in the next post.
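In the meantime, a quick sanity check on the cleaned file can be done in Ruby before R gets involved. This is only a sketch: it inlines the header and the two sample rows from above so it runs on its own, and `tally_question_types` and `needs_librarian?` are names I've made up here. The split at type 4 follows the 1-3 versus 4-5 distinction described earlier.

```ruby
require 'csv'

# The header and two sample rows from the cleaned file, inlined for the example
CLEANED = <<~CSV
  question.type,question.format,time.spent,library.name,location.name,initials,timestamp
  4. Strategy-Based,In-person,5-10 minutes,Scott,Drop-in Desk,XQ,02/01/2011 09:20:11 AM
  4. Strategy-Based,In-person,10-20 minutes,Scott,Drop-in Desk,XQ,02/01/2011 09:43:09 AM
CSV

# Counts how many rows there are for each question type
def tally_question_types(csv_text)
  counts = Hash.new(0)
  CSV.parse(csv_text, headers: true).each do |row|
    counts[row["question.type"]] += 1
  end
  counts
end

# Types 4 and 5 are the ones said to need a librarian; the label starts with its number
def needs_librarian?(question_type)
  question_type.to_i >= 4
end

counts = tally_question_types(CLEANED)
counts.each { |type, n| puts "#{type}: #{n}" }

librarian_total = counts.sum { |type, n| needs_librarian?(type) ? n : 0 }
puts "Needing a librarian: #{librarian_total} of #{counts.values.sum}"
```

To run it against the real file, swap `CLEANED` for `File.read("libstats.csv")`.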

Miskatonic University Press
